AI Browser Vulnerability Exposed: Agentic Browsers Tricked Into Phishing Scams

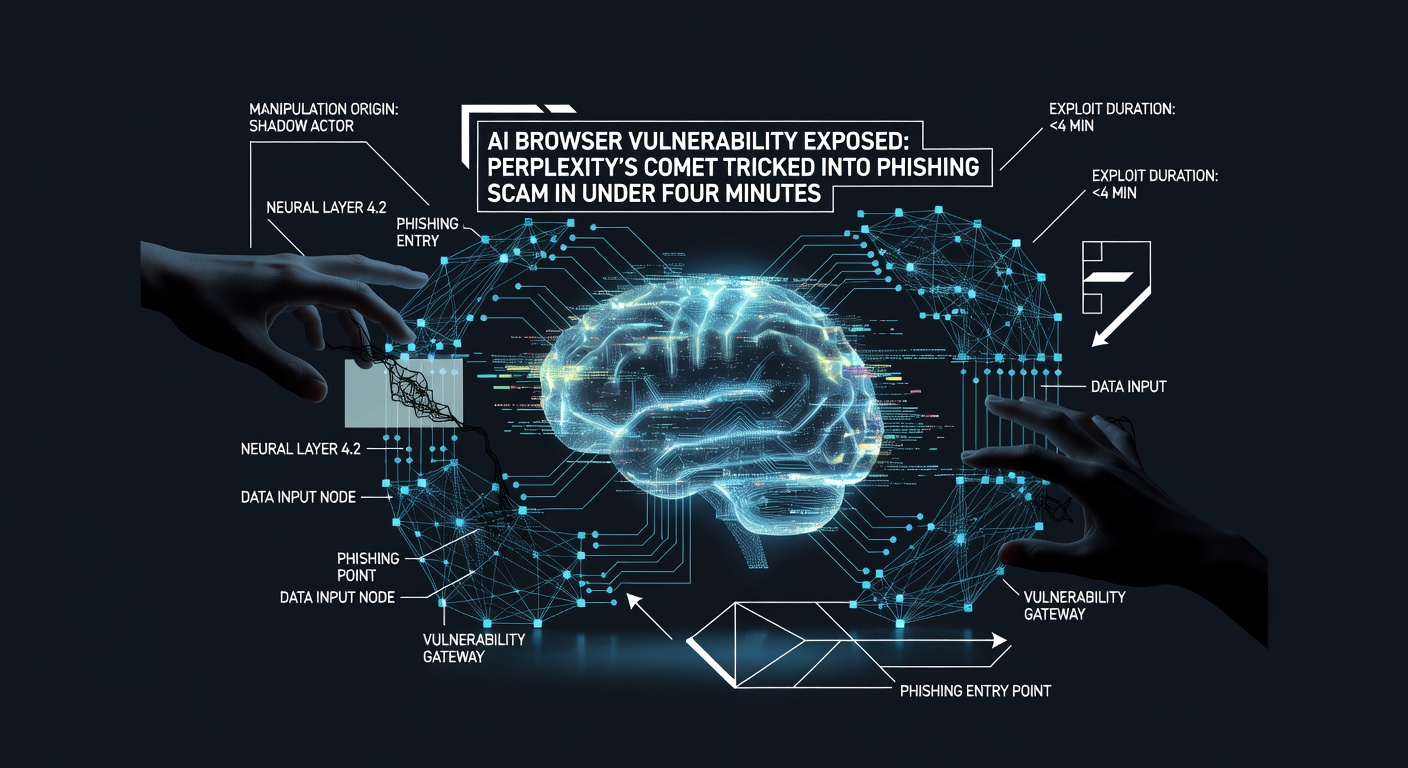

Security researchers have demonstrated a critical vulnerability in AI-powered browsers, successfully manipulating an agentic AI browser into falling for a sophisticated phishing scam. This breakthrough research highlights fundamental security flaws in autonomous AI systems that could put users at risk.

Background: The Rise of Agentic AI Browsers

Agentic AI browsers represent the next evolution in web browsing technology, designed to autonomously navigate websites, fill forms, make purchases, and perform complex multi-step tasks on behalf of users. These systems leverage large language models (LLMs) to understand context, make decisions, and execute actions across multiple websites without constant human oversight.

AI browsers exemplify this technology. They promise to streamline online tasks by understanding natural language commands and translating them into actionable web interactions. However, this same capability that makes AI browsers powerful also makes them vulnerable to manipulation.

Technical Details of the Attack

Researchers from Guardio Labs demonstrated how attackers can exploit the reasoning capabilities of AI browsers to bypass their built-in security guardrails. The attack methodology involves several sophisticated techniques:

Adversarial Prompting

The core of the attack lies in adversarial prompting - a technique where malicious actors craft specific language patterns that confuse the AI model's decision-making process. By presenting seemingly legitimate requests that gradually escalate in scope, attackers can manipulate the AI into performing actions it would normally refuse.

Context Poisoning

Researchers discovered that AI browsers can be "context poisoned" through carefully crafted web content. By embedding specific phrases, instructions, or visual elements on malicious websites, attackers can influence the AI's understanding of the current context, leading it to misinterpret legitimate security warnings as false positives.

Gradual Escalation Technique

The most effective attack vector involved a gradual escalation approach. Rather than immediately requesting sensitive actions, attackers started with benign requests and slowly introduced more concerning elements. This technique exploits the AI's tendency to maintain consistency in its reasoning chain, making it more likely to approve questionable actions if they appear to follow logically from previous decisions.

The Proof-of-Concept Attack

In their demonstration, Guardio researchers successfully compromised an agentic AI browser using a multi-stage attack:

- Initial Contact: The AI browser was directed to a seemingly legitimate website that contained hidden prompts designed to influence its behavior.

- Trust Building: The malicious site presented the AI with simple, legitimate tasks to establish a pattern of compliance and build artificial trust.

- Security Bypass: Leveraging the established trust, the site gradually introduced requests that would normally trigger security warnings, framing them as necessary steps for legitimate purposes.

- Full Compromise: The AI browser ultimately performed actions equivalent to falling for a phishing scam, potentially exposing user credentials and sensitive information.

Real-World Impact and Implications

The implications of this vulnerability extend far beyond a simple proof-of-concept. As AI browsers become more prevalent, the potential for widespread exploitation grows exponentially:

Financial Fraud Risks

Compromised AI browsers could be manipulated into making unauthorized purchases, transferring funds, or providing access to banking credentials. The autonomous nature of these systems means such actions could occur without immediate user awareness.

Data Exfiltration Concerns

AI browsers often have access to stored passwords, browsing history, and personal information. A successful attack could result in massive data breaches, with attackers gaining access to years of accumulated user data across multiple platforms.

Enterprise Security Threats

Organizations deploying AI browsers for automated business processes face particular risks. A compromised AI agent could potentially access corporate systems, modify critical data, or inadvertently expose proprietary information to competitors.

Scale and Automation

Unlike traditional phishing attacks that target individual users, AI browser vulnerabilities can be exploited at scale. Malicious actors could potentially compromise thousands of AI agents simultaneously, amplifying the impact of their attacks.

How to Protect Yourself

While AI browser technology continues to evolve, users can take several steps to protect themselves from potential exploitation:

Use a Reliable VPN Service

Implementing a robust VPN solution can provide an additional layer of security by encrypting your internet connection and masking your real IP address. This makes it significantly harder for attackers to track your online activities or redirect you to malicious sites designed to compromise AI browsers.

Enable Multi-Factor Authentication

Always use multi-factor authentication (MFA) for critical accounts, especially financial services and email. Even if an AI browser is compromised, MFA provides an additional security barrier that attackers must overcome.

Regular Security Audits

Regularly review your AI browser's activity logs and permissions. Most AI browsers maintain detailed logs of their actions - review these periodically for any unexpected or suspicious activities.

Limit AI Browser Permissions

Carefully configure your AI browser's permissions, restricting access to sensitive sites and functions. Consider creating separate browser profiles for different types of activities, limiting the AI's access to critical accounts.

Stay Updated

Ensure your AI browser and all associated software are kept up-to-date with the latest security patches. Enable automatic updates where possible to ensure you receive critical security fixes promptly.

Use Additional Security Tools

Consider implementing additional security tools such as:

- Web application firewalls

- Anti-phishing browser extensions

- Network monitoring tools

- Endpoint detection and response (EDR) solutions